How much should you trust AI? — Tip #17

You already know the answer 🤖

I’m amazed at how many friends and people in my extended network are relying on AI to do everything in their lives. What used to be a simple Google search is now often just reading the AI summary at the top of the page. Most non‑tech people treat it almost religiously, as an infallible machine, but the funny part is that infallible is the one thing these models are not.

I was recently building an alternative to Loom, a tool that lets you seamlessly record your desktop and add your beautiful face to the video. It’s used for tutorials, sales presentations and the like.

While building it, I realized the player wasn’t showing the video duration. I found that frustrating, because you don’t really know how long the video will play. So I decided to ask AI to integrate that part. Here’s what I asked:

In the player display the total time of the video so that user can see how much is left

Here is the before 👇

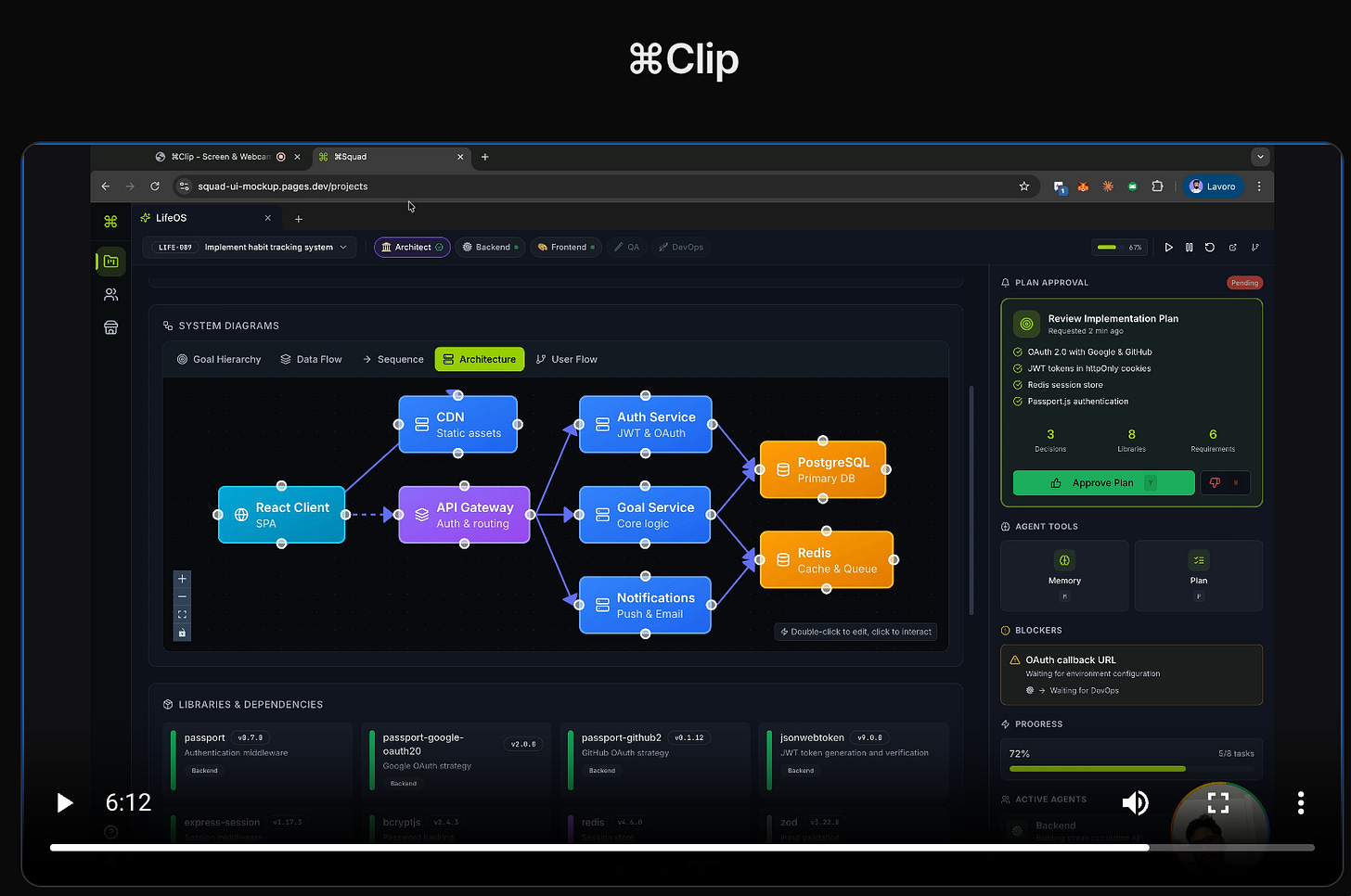

And here is the after 👇

Do you notice anything different? We finally have the duration in place, but we also have a purple color that popped up, the font has changed, we no longer see the three dots next to the fullscreen button, and the control buttons are now below instead of above. I didn’t request any of these changes 🤷‍♂️.

Why did this happen?

㊙️ Lack of meaning: AI doesn’t really understand what I asked. It breaks the text down into tokens and generates the most likely continuation given its training and the provided context.

🎲 Stochastic model: AI is not deterministic. If I send the same prompt again, next time it might turn the bar red because it has “seen it” on YouTube, or it might add the duration like I asked without any extra changes. You can’t predict exactly what you’ll get back.

🧑‍🏫 Assertive: To keep you engaged, AI often gives answers confidently, without expressing doubt, so you don’t question its authority and instead feel like you’re working with an expert who knows everything.
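To make the first two points a bit more concrete, here is a toy Python sketch. It assumes the model has already assigned scores (logits) to a handful of possible next tokens; the tokens and numbers are made up for illustration, and a real model chooses among tens of thousands of tokens, not four. Greedy decoding always picks the top score, while sampling draws from a softmax distribution, so the same prompt can produce a different result on each run.

```python
import math
import random

# Hypothetical scores a model might assign to the next token when asked what
# to do with the progress bar. Made-up numbers, for illustration only.
LOGITS = {"purple": 2.0, "red": 1.5, "blue": 1.0, "unchanged": 0.5}


def softmax(logits, temperature):
    """Turn raw scores into probabilities; a lower temperature sharpens the distribution."""
    scaled = {tok: score / temperature for tok, score in logits.items()}
    peak = max(scaled.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - peak) for tok, s in scaled.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def greedy(logits):
    """Deterministic decoding: always pick the single highest-scoring token."""
    return max(logits, key=logits.get)


def sample(logits, temperature, rng):
    """Stochastic decoding: draw one token in proportion to its probability."""
    probs = softmax(logits, temperature)
    tokens = list(probs)
    return rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]


if __name__ == "__main__":
    rng = random.Random()  # unseeded: each run can differ, like resending a prompt
    print("greedy :", [greedy(LOGITS) for _ in range(5)])
    print("sampled:", [sample(LOGITS, 1.0, rng) for _ in range(5)])
```

Lowering `temperature` toward zero makes `sample` behave more and more like `greedy`, which is one common way to make a model's output more predictable.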

So when I asked it to add the duration, it parsed the request, “reconstructed” the surrounding code, and gave me the most likely answer it could come up with. Keep in mind: even when you ask it to “sum 2 + 2”, AI doesn’t understand the question; it has simply learned that the pattern “2 + 2” is typically followed by 4.
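The “2 + 2” point can be illustrated with an even cruder sketch: a lookup table that counts which token followed a prompt in a tiny, made-up corpus. This is a deliberate oversimplification, not how a real LLM is built (a real model generalizes to patterns it has never seen verbatim instead of returning nothing), but it shows how a correct answer can come from frequency rather than arithmetic.

```python
from collections import Counter, defaultdict

# A tiny, made-up "training corpus". Note that it contains a wrong fact once.
CORPUS = [
    "2 + 2 = 4",
    "2 + 2 = 4",
    "2 + 2 = 4",
    "2 + 2 = 5",
    "3 + 3 = 6",
]


def train(corpus):
    """Count which token follows each prompt (everything before the last token)."""
    table = defaultdict(Counter)
    for line in corpus:
        *prefix, last = line.split()
        table[" ".join(prefix)][last] += 1
    return table


def complete(table, prompt):
    """Return the continuation seen most often after `prompt`, or None if never seen.

    No arithmetic happens here: the answer is whatever followed the prompt most often.
    """
    counts = table.get(prompt)
    if not counts:
        return None
    return counts.most_common(1)[0][0]


if __name__ == "__main__":
    model = train(CORPUS)
    print(complete(model, "2 + 2 ="))  # prints 4, only because it was seen most often
    print(complete(model, "2 + 3 ="))  # prints None: this pattern never appeared
```

If the made-up corpus contained “2 + 2 = 5” more often than “2 + 2 = 4”, `complete` would just as confidently return 5.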

Of course, the companies building these models know their weaknesses and work hard to reduce them, for example by making certain associations more deterministic so you don’t start doubting whether these models are actually intelligent. Useful as they are, they are not actually “smart”. A bit like the smartphone (see what I did there? Smart, isn’t it?!).

Your weekly tip: As you use more and more AI in your life, remember that the underlying models are non‑deterministic. They don’t really understand what you mean. It feels like magic, but it’s not: they’re just very accurate prediction machines. Don’t throw your brain away yet 👉 it’ll be handy the next time a prediction goes off the rails.

Don’t forget to share this tip with a friend who’s addicted to AI; maybe they’ll use it with a bit more awareness 🫰

See you next week, until then…

Don’t Panic 😱

“I was recently building an alternative to Loom”

Do share 👀

I wish the general population understood this… It’s baffling to me when I see people I know assume with full certainty that what ChatGPT tells them is the absolute truth, even though right there in the chat window it says “ChatGPT can make mistakes. Check important info.”!!

(╯°□°)╯︵ ┻━┻

They have no mental model of how the model creates the responses they see. They don’t understand how it’s possible to generate text without “understanding” it. Some even think LLMs are conscious simply because they output letters instead of numbers…

They seem to get it when I explain it 1:1, but that doesn’t scale. At minimum, there should be PSAs in schools, on TV, in online ads, etc.

And look, I get using something without caring much about its inner workings. I used the internet for many years as a pre-teen without looking up how it works, let alone electricity! In my defense, though, those things were invented before I was born; I was raised in an environment where they were always a given. But LLMs sprang up during our lifetime, right in front of our eyes, yet most people just took the news without awe, without curiosity, without any thinking…